Next week sees the launch of new research into hybrid working: a report commissioned by the National Digital Leadership Network in Ireland, titled Global Trends Towards Flexible, Hybrid Working and its Impact for Digital Leadership in Higher Education. This strategic report will focus on the global trend towards flexible, hybrid working and its impact on Digital Leadership in Higher Education in Ireland and beyond. We examine the wider implications of hybrid learning and working for…

Category: Policy

I am really looking forward to speaking at next week’s World of Learning Summit. To prepare for the session and to offer an insight into what it will cover, I contributed an article to the Learning Magazine, which you can read freely online. My session at the summit is on Wednesday and will offer an interactive discussion forum for leaders. We’ll explore hybrid working recruitment and retention, new trends in hybrid…

Today is a big day! Together with colleagues from all across the UK, I am heading to London. It’s the Week of VocTech, the annual showcase of everything that’s happening across the sector. I feel fortunate to be continuing my work as Strategic Lead for the partnership between ALT and the Ufi VocTech Trust, even though I stepped down as ALT’s CEO earlier this year. There are a couple of reasons why: from a personal…

This is the second post about my current work on researching alternative ways of measuring impact in Learning Technology. See the first post, in which I set out the context of my work and what I am particularly focused on. Alongside the practical work with the ALT Journal Strategic Working Group, I am pleased that my proposal for a short session ‘The quality of metrics matters: how we measure the impact of research…

If you have been following the reporting on the gender pay gap in the UK, then this has been a sobering week indeed. You can search for the reports from different employers here. I have looked through the reports of many of the education providers and sector bodies that I work with, and the scale of the ‘gaps’ highlighted in some of them is staggering. Not a surprise, given my day-to-day experience of…

Last year I worked on finding a sustainable new home for the Open Access journal Research in Learning Technology. It was the third such transition I have worked on for ALT since 2008, and during this period I have contributed to the thinking around Open Access publishing in Learning Technology, often through ALT’s contribution to initiatives such as the 2012/3 ‘Gold Open Access Project’. This year I will be working with…

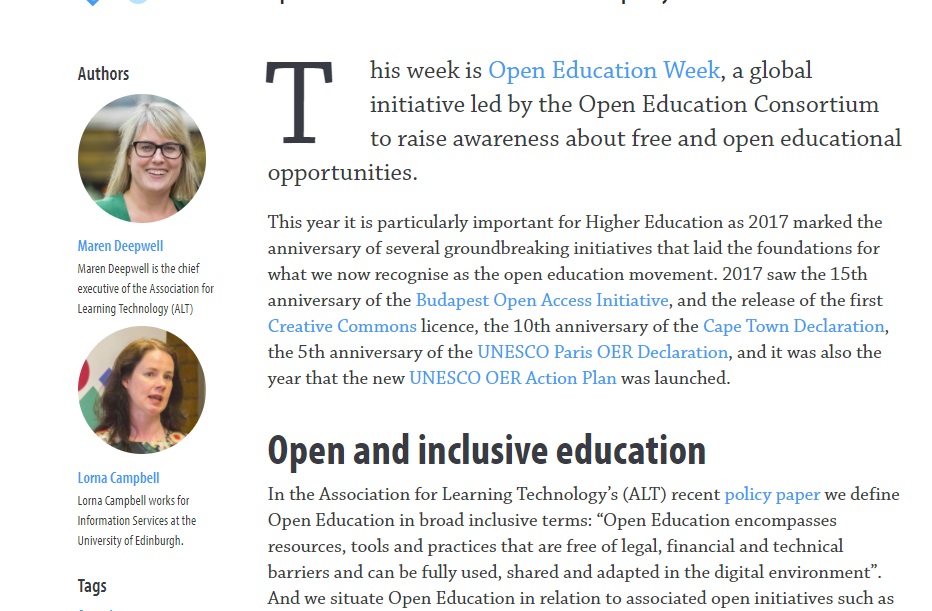

I gratefully acknowledge the work of Lorna Campbell, with whom I wrote this article, and of David Kernohan for his editorial input. Read the full article on Wonkhe. This week is Open Education Week, a global initiative led by the Open Education Consortium to raise awareness about free and open educational opportunities. This year it is particularly important for Higher Education, as 2017 marks the anniversary of several groundbreaking initiatives that laid the foundations for…

I have been working on some articles about effective education policy this week, and that prompted me to look back at Pasi Sahlberg’s contribution (slides available here) to the Opening Plenary at last December’s OEB conference. It was an inspiring 20 minutes or so that combined hard-hitting policy insight with a global perspective from the Finnish expert and culminated in a sing-along that makes the YouTube video worth watching! In his talk there was…

It’s been nearly a year since I wrote my first post on leadership as an open practice, inspired by the 2015 OER conference. In this post I want to reflect on how my experiment is going, what progress I have made and what’s next. Where it all began… In April last year, I wrote: “I’d like to try and adopt open practice in my role and connect with others who do the same.…

It’s #OpenEducationWk and I’ve been inspired by activities and blog posts from across the community, including a special edition of the #LTHEchat (helpful intro here) and a number of webinars organised by the ALT Open Education Special Interest Group (including a preview today of the OER16 Open Culture conference coming up in April). Seeing so much commitment to and enthusiasm for scaling up open practice and resources has been a joy – but it’s also…